Anthropic Seizes Colossus: The SpaceX Compute Deal That Rewires the AI Arms Race

A 300-megawatt pact with Elon Musk’s SpaceX gives Anthropic access to more than 220,000 NVIDIA GPUs and signals that the next phase of AI infrastructure dominance is no longer about models. It’s about raw compute.

A Rival’s Data Center, An Unexpected Alliance

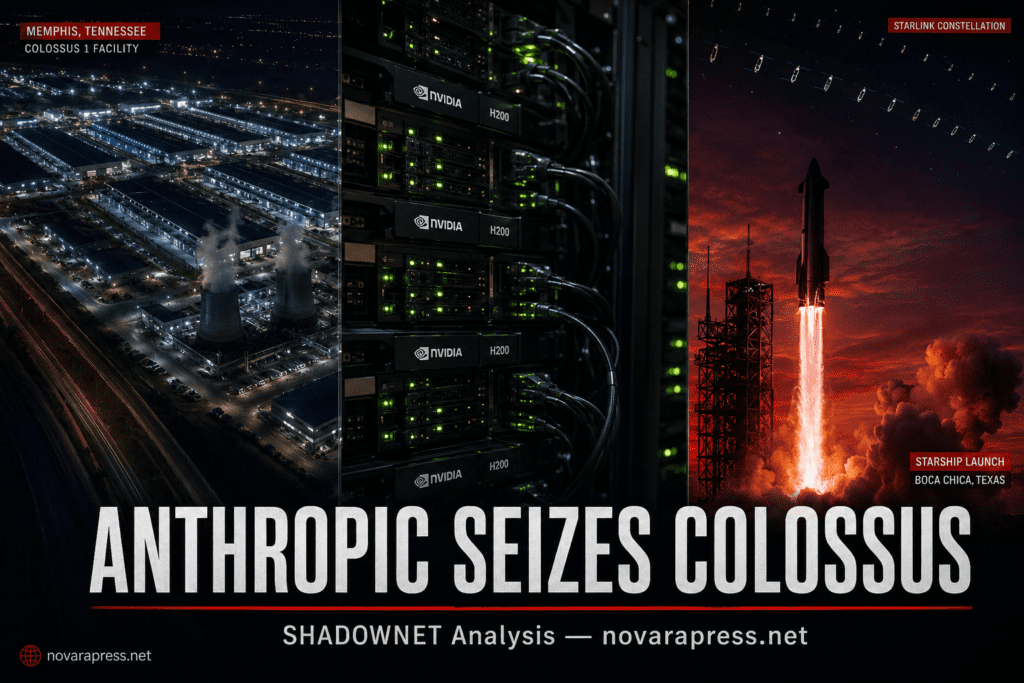

On Wednesday, May 6, 2026, Anthropic announced it had signed an agreement with SpaceX to secure the entire compute capacity of Colossus 1, the sprawling data center in Memphis, Tennessee. The deal delivers more than 300 megawatts of new capacity and access to over 220,000 NVIDIA GPUs, including dense deployments of H100, H200, and GB200 accelerators, within a single month. The announcement came at Anthropic’s developer conference in San Francisco, delivered by head of product Ami Vora. The effects were immediate.

For paid subscribers on Claude Pro, Max, Team, and Enterprise plans, three changes took effect the same day: Claude Code’s five-hour rate limits were doubled across the board; peak-hours throttling was permanently lifted for Pro and Max accounts; and API rate limits for Claude Opus models received a material increase, with higher per-minute token throughput across multiple tiers.


The timing is almost theatrical. Elon Musk, whose xAI venture has now been absorbed into SpaceX, has publicly branded Anthropic “misanthropic and evil” — yet in a post on X following the announcement, he described himself as “impressed” with the Anthropic team and said Claude would “probably” be good. Commercial logic, it appears, overrides ideological combat.

What 220,000 GPUs Actually Means

The Colossus 1 facility in Memphis is not a standard hyperscale data center. It was engineered by xAI as a density-first, GPU-forward installation — purpose-built for the specific demands of large language model training and inference. NVIDIA’s H100 and H200 chips dominate the floor, with next-generation GB200 accelerators layered into the stack. Three hundred megawatts is not a footnote — it is roughly the electricity demand of a mid-sized American city, redirected entirely toward AI computation.
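As a rough sanity check on those figures, dividing the quoted power by the quoted GPU count gives the average facility power per GPU. The sketch below is back-of-envelope arithmetic only: both inputs are the article's "more than" figures, the function name is illustrative, and the result covers everything the facility powers (cooling, networking, host CPUs), not the accelerators alone.

```python
# Figures quoted in the article; both are stated as "more than" values.
FACILITY_POWER_MW = 300
GPU_COUNT = 220_000


def facility_watts_per_gpu(power_mw: float, gpus: int) -> float:
    """Average facility power per GPU in watts, overhead included."""
    return power_mw * 1_000_000 / gpus


watts = facility_watts_per_gpu(FACILITY_POWER_MW, GPU_COUNT)
print(f"{watts:.0f} W of facility power per GPU")
# prints: 1364 W of facility power per GPU
```

The result is a rough average across the whole site. How that power actually splits between accelerators and supporting systems is not disclosed.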

| Partnership | Capacity | Timeline | Status |
|---|---|---|---|
| SpaceX (Colossus 1) | 300+ MW / 220,000+ GPUs | Within 1 month | ✓ LIVE |
| Amazon (AWS) | Up to 5 GW (1 GW by Q4 2026) | End of 2026 | ⏳ RAMPING |
| Google + Broadcom | 5 GW (TPUs + custom silicon) | Online 2027 | ⏳ PENDING |
| Microsoft + NVIDIA | $30 billion Azure capacity | Strategic / ongoing | ⏳ ACTIVE |
| Fluidstack | $50 billion U.S. AI infrastructure | Multi-year | ⏳ ACTIVE |

The Colossus deal stands apart from the rest of Anthropic’s compute stack precisely because it is available now. While the Amazon, Google, and Microsoft agreements represent future capacity that comes online in 2026 and 2027, SpaceX’s Colossus 1 delivers usable GPU-hours within weeks. In a market where competitors are measured in inference throughput per dollar, that immediacy matters enormously.

The Rate Limit Problem — And Why It Was Urgent

Claude Code’s usage constraints had become a pressure point. Reports of developers hitting the five-hour rolling cap circulated widely in early 2026, with coverage from the BBC and across developer communities. In March, Anthropic ran an off-peak usage promotion as a temporary relief valve, a signal that the supply-demand gap was real and growing. The SpaceX deal directly addresses that gap.


The three changes announced today translate into concrete operational improvements for developers. Doubling Claude Code’s five-hour rate limits means practitioners can run longer, uninterrupted agentic coding sessions. Removing peak-hour throttling for Pro and Max accounts eliminates degraded performance during high-demand windows. And raising Opus API ceilings directly benefits enterprise teams running production pipelines on Anthropic’s most capable models.
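To make the effect of a doubled cap concrete, the sketch below models a rolling usage cap in Python. It is an illustration only, not Anthropic's implementation: the `RollingCap` class, the abstract usage "units", and the limit values are all assumptions, and the only figure taken from the article is the five-hour window.

```python
from collections import deque

WINDOW_SECONDS = 5 * 60 * 60  # the five-hour window named in the article


class RollingCap:
    """Hypothetical rolling-window cap over abstract usage units."""

    def __init__(self, limit, window=WINDOW_SECONDS):
        self.limit = limit
        self.window = window
        self.events = deque()  # (timestamp, units) pairs, oldest first
        self.used = 0

    def try_consume(self, units, now):
        """Record usage at time `now` if it fits under the cap; return success."""
        # Drop usage that has aged out of the rolling window.
        while self.events and self.events[0][0] <= now - self.window:
            _, expired_units = self.events.popleft()
            self.used -= expired_units
        if self.used + units > self.limit:
            return False  # over the cap: the caller has to wait
        self.events.append((now, units))
        self.used += units
        return True
```

Under this model, a session that exhausts a limit of 100 units is blocked until its earliest usage ages out of the window, while the same session against a limit of 200 units keeps running for twice as much work.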

For platform architects, the downstream effect is significant: higher per-minute token throughput reduces the need for aggressive request batching, complex retry logic, and session-splitting workarounds that teams had been forced to engineer around previous constraints.
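The retry logic mentioned above typically looks something like the following minimal sketch: exponential backoff with jitter around a rate-limited call. Everything named here is hypothetical. `RateLimitError` stands in for whatever rate-limit error a client library raises, and `call_with_backoff` with its parameters is an illustrative helper, not part of any Anthropic SDK.

```python
import random
import time


class RateLimitError(Exception):
    """Hypothetical stand-in for an HTTP 429 style rate-limit response."""


def call_with_backoff(send, max_attempts=5, base_delay=1.0, max_delay=30.0,
                      sleep=time.sleep):
    """Call send(); on RateLimitError, retry with exponential backoff and jitter."""
    for attempt in range(max_attempts):
        try:
            return send()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            # The delay ceiling doubles each attempt, capped at max_delay.
            # Picking a random delay below the ceiling spreads retries out.
            ceiling = min(max_delay, base_delay * 2 ** attempt)
            sleep(random.uniform(0, ceiling))
```

Higher rate limits do not remove the need for a wrapper like this, but they mean the retry path is taken less often and the surrounding batching and session-splitting code can be simpler.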

Beyond Memphis: Orbit, IPO, and the Infrastructure Arms Race

The Colossus deal carries strategic dimensions that extend well beyond rate limits. SpaceX recently filed paperwork with the FCC to launch up to one million satellites — a constellation explicitly framed around orbital data center infrastructure. The Anthropic announcement includes language signaling interest in partnering with SpaceX on “multiple gigawatts of orbital AI compute capacity.” No agreement on orbital infrastructure has been signed, but the framing is deliberate. Satellite-based compute would eliminate geographic constraints on AI infrastructure deployment and create entirely new latency and sovereignty dynamics for global enterprise customers.

The timing of the deal is also inseparable from SpaceX’s financial position. The company is targeting an IPO widely expected to be the largest in corporate history, anticipated later in 2026. Securing a tier-one AI company as a full-capacity compute tenant at Colossus 1 dramatically strengthens SpaceX’s pitch as an AI infrastructure provider — not merely a launch company or a satellite operator.

| Dimension | Anthropic Position | Competitive Signal |
|---|---|---|
| Near-term GPU access | 220,000+ GPUs via Colossus 1 | Immediate advantage |
| Long-range capacity | 10+ GW across 4 major partners | Multi-year runway secured |
| Geographic expansion | Amazon adds EU + Asia inference | Data residency compliance push |
| Orbital compute | Expressed interest, no deal signed | Exploratory, 2027+ horizon |

Anthropic has also been deliberate about the political framing of its infrastructure strategy. The company has stated it will partner only with what it describes as “democratic countries whose legal and regulatory frameworks can support investments of the relevant scale” — language that functions simultaneously as a values statement and a market-access positioning move against Chinese AI competitors operating under different regulatory architectures.

Four Trajectories: What This Deal Unlocks

Anthropic’s stacked compute partnerships enable sustained rate-limit relief, accelerating enterprise adoption of Claude Code and Opus. Developer retention improves, API revenue grows, and orbital compute ambitions attract a new class of sovereign and defense clients ahead of a potential IPO.

The ideological gap between Musk and Anthropic’s leadership generates reputational friction among Anthropic’s safety-focused enterprise clients. The partnership holds commercially but requires sustained narrative management. Orbital compute ambitions stall amid regulatory complexity and competing SpaceX priorities.

Over-reliance on any single near-term provider creates a structural risk. If Colossus 1 availability shifts due to SpaceX’s own AI ambitions, particularly post-IPO, Anthropic faces a capacity cliff before Amazon and Google partnerships come fully online. The gap between 2026 and 2027 capacity timelines becomes a window of vulnerability.

If orbital compute materializes, Anthropic and SpaceX jointly redefine AI infrastructure architecture. Terrestrial latency constraints dissolve for a class of global enterprise and government clients. The competitive advantage is no longer model capability alone; it is compute sovereignty and geographic ubiquity. Rivals without orbital capacity face structural exclusion from entire market segments.

The Model War Is Over. The Infrastructure War Has Begun.

The frontier AI competition was, for most of the past three years, understood as a model capabilities race: which lab trains better on more data, which architecture scales more efficiently, which safety approach survives contact with real-world deployment. The Anthropic-SpaceX deal signals a structural transition in what actually determines AI market position.

Model performance gaps between frontier labs have narrowed faster than most analysts expected. Claude 4, GPT-5, and Gemini Ultra compete at close range across benchmarks. What differentiates the next phase is not a new training breakthrough — it is the ability to serve demand at scale, without throttling, without geographic restriction, and without the latency penalties that constrain enterprise deployment.

Anthropic’s compute stack — now spanning SpaceX, Amazon, Google, Microsoft, and Fluidstack — represents a deliberate attempt to solve this problem before competitors lock in the same advantage. The company trains on AWS Trainium, Google TPUs, and NVIDIA hardware simultaneously, maintaining hardware diversity that prevents any single chip supplier from becoming a strategic bottleneck.

— SHADOWNET Analysis

The orbital compute dimension is speculative but should not be dismissed. SpaceX’s million-satellite FCC filing represents an infrastructure buildout at a scale that would make terrestrial data center geography irrelevant for many use cases. Latency from low Earth orbit for AI inference is a solvable engineering problem. Regulatory permitting, power delivery, and thermal management in orbit are not trivial — but neither are they unsolvable for an organization that has already demonstrated reusable orbital launch capability. If this partnership produces even a fraction of its orbital compute ambitions, it rewrites the infrastructure map entirely.

For now, the practical signal is clear: Anthropic has secured the near-term compute supply needed to meet growing demand from Claude Code’s developer base, and has positioned itself with a supply stack that extends capacity through 2027 and beyond. The rate limits doubled today are a direct consequence of that strategy. The question for competitors is whether they can match this supply architecture — or whether Anthropic’s compute stack becomes, over the next 24 months, the defining structural moat in the frontier AI market.

The Anthropic-SpaceX deal is not a technology story. It is a logistics story — and in AI, logistics is now destiny. The company that controls the GPU hours controls the product roadmap, the pricing, and ultimately the market. Anthropic just secured a significant position in that control stack. The race that matters now runs through Memphis, Tennessee and, eventually, low Earth orbit.


- Anthropic — “Higher usage limits for Claude and a compute deal with SpaceX” — May 6, 2026 — anthropic.com/news/higher-limits-spacex

- Axios — “Anthropic will get compute capacity from Elon Musk’s SpaceX” — May 6, 2026

- The Next Web — “Anthropic raises Claude Code and Opus API rate limits, citing SpaceX Colossus 1 deal” — May 6, 2026

- CoinDesk — “Anthropic signs Elon Musk’s SpaceX for Colossus 1 compute ahead of June IPO” — May 6, 2026

- Engadget — “Anthropic is doubling Claude Code rate limits after deal with SpaceX” — May 6, 2026

- Yahoo Finance / The Information — Anthropic-SpaceX compute partnership coverage — May 6, 2026

- SpaceX FCC Filing — Orbital satellite constellation for data center infrastructure — 2026