NOVARAPRESS ANALYSIS | April 1, 2026 | AI & Technology

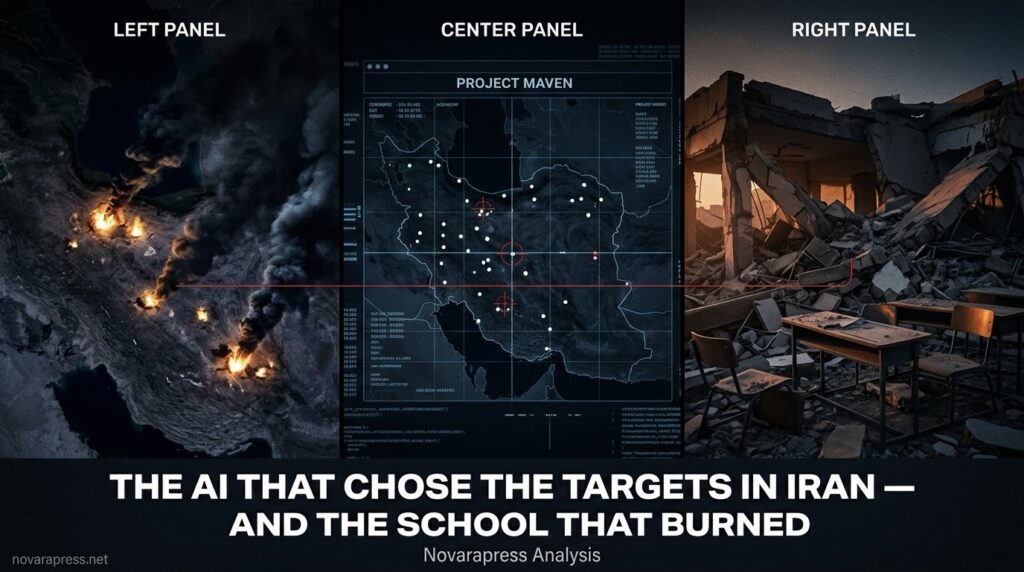

The AI That Chose the Targets in Iran — And the School That Burned

On February 28, the US military struck 1,000 targets in Iran within the first 24 hours of the war. No military in history has ever moved that fast. The reason is not more soldiers, more pilots, or more intelligence analysts. The reason is artificial intelligence — and the Iran war has become the most consequential test of AI-powered warfare ever conducted.

It has also produced the most damning failure of AI-powered warfare to date.

Project Maven: The Machine Behind the War

At the center of the US targeting operation is a system called Project Maven — a Pentagon AI program launched in 2017 and now run by the data analytics firm Palantir. Maven uses AI algorithms to analyze satellite imagery, intelligence feeds, and battlefield data to identify potential military targets and prioritize them for strikes.

In the Iran war, Maven has been combined with Claude — the AI model built by Anthropic — to help military planners sort targeting data and make faster decisions. One researcher described it as “Google Earth for war: a map with white dots, infused with information like elevation, coordinates, and whether it is friendly or enemy.”

In raw operational terms, the results have been extraordinary. The US military has now struck more than 11,000 targets in Iran in 32 days, a pace that would have been impossible without AI-accelerated decision-making. CENTCOM chief Admiral Brad Cooper confirmed the military is “leveraging a variety of advanced AI tools” that can “turn processes that used to take hours and sometimes even days into seconds.”

The School in Minab

On February 28, a US Tomahawk cruise missile struck the Shajareh Tayyebeh girls’ school in Minab, southern Iran. At least 168 people were killed, most of them children.

The school was adjacent to an Islamic Revolutionary Guard Corps (IRGC) base. It appeared on the US target list. The missile hit it.

The Pentagon initially blamed Iran for the strike. It later announced an investigation. The Washington Post confirmed the school was on a US target list. Senate Democrats wrote to Defense Secretary Pete Hegseth demanding answers about AI’s role in the targeting decision.

The question that investigation must answer is not simply who gave the final order. The deeper question is: what role did the AI play in placing a girls’ school on a strike list?

The Anthropic Rupture

One day before the war began — on February 27 — the Trump administration declared Anthropic a “supply chain risk” and effectively blacklisted the company from US government contracts.

The reason: Anthropic had refused to allow its AI to be used for fully autonomous weapons systems and mass domestic surveillance. The Pentagon said it should be able to use the technology for all lawful purposes. Anthropic drew a line. The administration responded by treating one of America’s most valuable AI companies as if it were a Chinese military contractor.

The Pentagon spokeswoman’s statement was blunt: “America’s warfighters will never be held hostage by unelected tech executives and Silicon Valley ideology. We will decide, we will dominate, and we will win.”

The irony is sharp. The same AI tools that the US military is using to prosecute the war in Iran were built by a company Washington just declared a national security threat — because that company refused to remove human oversight from decisions about who lives and who dies.

What AI Can and Cannot Do in War

The debate over AI in warfare is often framed as a question about autonomous killer robots. The reality is more subtle — and in some ways more troubling.

Current military AI systems are not pulling triggers autonomously. Humans retain final authority over strike decisions. What AI is doing is something more foundational: it is shaping which targets appear on the list in the first place, how they are prioritized, and how fast commanders are expected to decide.

When an AI system processes 50,000 intelligence feeds and presents a ranked list of targets to a commander who has 30 seconds to approve or reject each one, the human is technically making the decision. But the AI has already made most of the choices that matter.

Experts call this “automation bias” — the well-documented tendency of humans to defer to algorithmic recommendations, especially under time pressure and in high-stakes environments. The machine doesn’t pull the trigger. It just makes it very hard to say no.

The Accountability Gap

When the Minab school was struck, the initial response from Washington was to deny responsibility. When that became untenable, the response shifted to “we are investigating.” No one has been held accountable. No targeting protocol has been publicly changed.

This pattern is not unique to the Iran war. In Gaza, AI-assisted targeting systems produced civilian casualty rates that drew global condemnation. In Yemen, algorithmic strike lists contributed to attacks on weddings, hospitals, and markets. In each case, the presence of AI in the decision chain made accountability harder to assign — not easier.

A UN resolution passed in December 2025 calls for multilateral discussions on AI in warfare. A three-day meeting is scheduled for June 2026. By the time that meeting occurs, the US military will, at its current pace, have struck tens of thousands of targets in Iran using systems that no international body has the authority to inspect, regulate, or hold accountable.

The Bottom Line

The Iran war is not just a geopolitical crisis. It is a live demonstration of what happens when the most powerful military in history deploys AI targeting at industrial scale — with no established legal framework, no independent oversight, and no functioning accountability mechanism.

The IRGC’s decision to threaten American tech companies today is, in part, a response to this reality. Tehran has concluded that Silicon Valley is not a neutral commercial actor. It is part of the targeting chain.

Whether that conclusion is legally or morally justified is a question for international lawyers. But as a description of how the technology actually functions in this war, it is not wrong.

— Novarapress Analysis | novarapress.net